Try it online with Colab Notebooks!¶

All the following examples can be executed online using Google Colab notebooks:

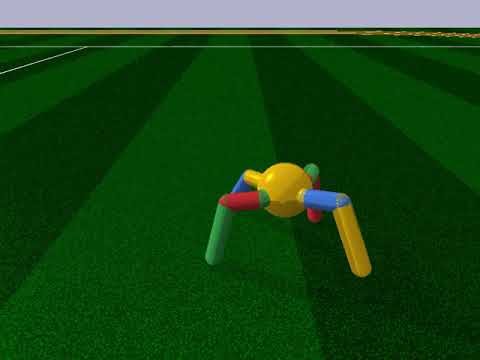

Basic Usage: Training, Saving, Loading¶

In the following example, we will train, save and load a DQN model on the Lunar Lander environment.

Note

LunarLander requires the Python package box2d. You can install it using apt install swig and then pip install box2d box2d-kengz.

Multiprocessing: Unleashing the Power of Vectorized Environments¶

Using Callback: Monitoring Training¶

You can define a custom callback function that will be called inside the agent. This could be useful when you want to monitor training, for instance to display live learning curves in TensorBoard (or in Visdom) or to save the best agent. If your callback returns False, training is aborted early.

Atari Games¶

Pong Environment¶

Training an RL agent on Atari games is straightforward thanks to the make_atari_env helper function. It will do all the preprocessing and multiprocessing for you.

PyBullet: Normalizing input features¶

Normalizing input features may be essential to successful training of an RL agent (by default, images are scaled but not other types of input), for instance when training on PyBullet environments. For that, a wrapper (VecNormalize) exists and will compute a running average and standard deviation of input features (it can do the same for rewards).

Note

You need to install pybullet with pip install pybullet.

Hindsight Experience Replay (HER)¶

For this example, we are using Highway-Env by @eleurent.

The parking env is a goal-conditioned continuous control task, in which the vehicle must park in a given space with the appropriate heading.

Note

The hyperparameters in the following example were optimized for that environment.

Learning Rate Schedule¶

All algorithms allow you to pass a learning rate schedule that takes as input the current progress remaining (from 1 to 0).

PPO’s clip_range parameter also accepts such a schedule. The RL Zoo already includes linear and constant schedules.
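A schedule is simply a function of the remaining progress. As a minimal sketch, a linear decay could be written as follows. The name `linear_schedule` is our choice here, and the usage lines are left as comments because they require SB3 and an environment.

```python
from typing import Callable


def linear_schedule(initial_value: float) -> Callable[[float], float]:
    """Return a schedule that decays linearly from initial_value to 0."""

    def schedule(progress_remaining: float) -> float:
        # progress_remaining goes from 1 (start of training) to 0 (end of training).
        return progress_remaining * initial_value

    return schedule


# Usage sketch (not executed here):
# from stable_baselines3 import PPO
# model = PPO("MlpPolicy", "CartPole-v1",
#             learning_rate=linear_schedule(3e-4),
#             clip_range=linear_schedule(0.2))
```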

Advanced Saving and Loading¶

In this example, we show how to use some advanced features of Stable-Baselines3 (SB3): how to easily create a test environment to evaluate an agent periodically, use a policy independently from a model (and how to save it, load it) and save/load a replay buffer.

By default, the replay buffer is not saved when calling model.save(), in order to save space on the disk (a replay buffer can be up to several GB when using images). However, SB3 provides save_replay_buffer() and load_replay_buffer() methods to save and load it separately.

Stable-Baselines3 can automatically create an environment for evaluation. For that, you only need to specify create_eval_env=True when passing the Gym ID of the environment while creating the agent. Behind the scenes, SB3 uses an EvalCallback.

Note

To continue training a model after loading it, we recommend loading the replay buffer to ensure stable learning (for off-policy algorithms). You also need to pass reset_num_timesteps=True to the learn function, which initializes the environment and agent for training if a new environment was created since saving the model.

Accessing and modifying model parameters¶

You can access the model’s parameters via the set_parameters and get_parameters functions, or via model.policy.state_dict() (and load_state_dict()), which use dictionaries that map variable names to PyTorch tensors. These functions are useful when you need to, e.g., evaluate a large set of models with the same network structure, visualize different layers of the network or modify parameters manually.

Policies also offer a simple way to save/load weights as a NumPy vector, using the parameters_to_vector() and load_from_vector() methods. The following example demonstrates reading parameters, modifying some of them and loading them into the model by implementing an evolution strategy (ES) for solving the CartPole-v1 environment. The initial guess for parameters is obtained by running A2C policy gradient updates on the model.

SB3 and ProcgenEnv¶

Some environments like Procgen already produce a vectorized environment (see discussion in issue #314). In order to use it with SB3, you must wrap it in a VecMonitor wrapper, which will also allow you to keep track of the agent's progress.

Record a Video¶

Record an MP4 video (here using a random agent).

Note

It requires ffmpeg or avconv to be installed on the machine.

Bonus: Make a GIF of a Trained Agent¶

Note

For Atari games, you need to use a screen recorder such as Kazam, and then convert the video using ffmpeg.